The National Centre for Computer Animation has been at the forefront of computer graphics and animation education and research in the UK since 1989.

In 2011, the Centre was presented with the Queen’s Anniversary Award for “World-class computer animation teaching with wide scientific and creative applications” and its contribution to world-leading excellence and pioneering development in the teaching of computer animation. The Queen’s Anniversary Prizes form part of the national honours system and are the most prestigious awards in UK education.

The aim of the NCCA is to promote science in the service of the arts and to embed a theoretical underpinning within a contemporary professional setting, allowing graduates to leave BU with highly sought-after skills in the computer animation, visual effects and computer games industries.

Our courses

Undergraduate courses

Postgraduate courses

Industry links and accreditations

We have forged links with many of the UK’s, and the world’s, most prominent animation organisations and award-winning professionals. BU is also one of a small number of institutions from around the world who have been granted Houdini Certified School status by Side Effects Software, and a number of our computer animation degrees boast the ScreenSkills Tick, indicating the quality of those courses. Find out more about our accreditations and industry links.

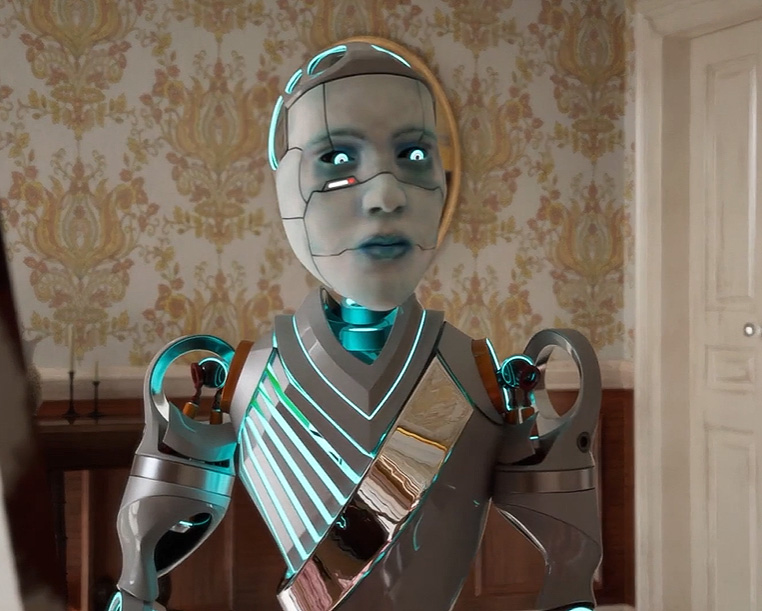

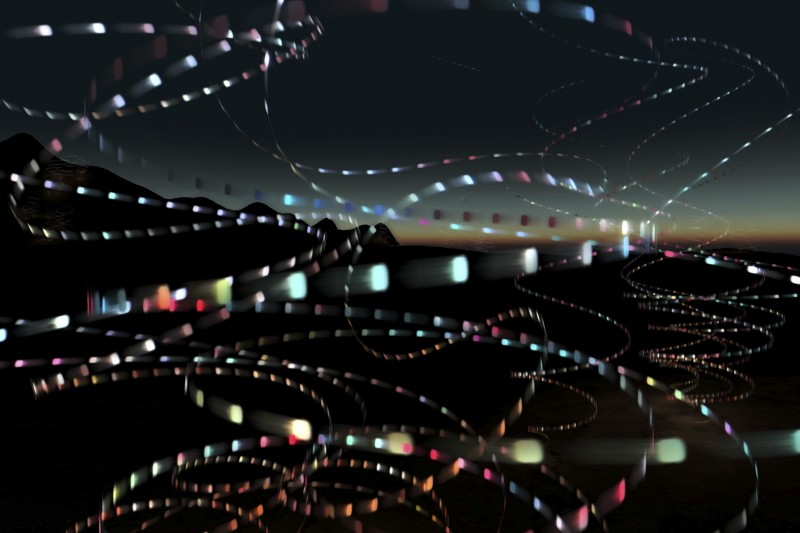

Industry-standard facilities

Our facilities on Talbot Campus can be found in Poole Gateway Building, a modern development that opened in spring 2020. This houses a range of industry-standard equipment and technology to support our students’ studies, including a green screen studio, virtual production facilities and computer labs equipped with professional production software. Take a look at our gallery or explore our virtual tour to find out more.

NCCA degree shows

The NCCA annual degree shows provide an opportunity for final year Undergraduate students and Master's students to showcase their work to industry professionals and potential employers. View the 2022 Undergraduate degree show booklet.

BFX Festival

The annual BFX Festival is one of the largest visual effects, animation and games festivals in the UK, bringing together professionals and students from across the world to share insights into how the best films, commercials, television shows and games are made.

Once Upon a Time in Animation

Celebrating 30 years of the National Centre for Computer Animation, the Once Upon a Time in Animation exhibition at Poole Museum provided an overview of 30 years of excellence in animation research, practice and innovation at Bournemouth University's National Centre for Computer Animation (NCCA).

Featuring a wealth of creative talent from students, graduates and researchers from BU, the exhibition explored different areas of animation, from film to game design, and includes household names such as Miffy, Beatrix Potter and Aardman.

Research

The Research Excellence Framework (REF) is the UK’s system for assessing the quality of research. Universities are scored across three elements – the quality of outputs (e.g. publications, performances, and exhibitions), their impact beyond academia, and the environment that supports research.

For REF 2021, the NCCA contributed to Bournemouth University's submission under Unit of Assessment 32 (UoA32): Art and Design: History, Practice and Theory. We submitted three impact case studies, which included projects using art to communicate scientific concepts, improving wellbeing through participatory art, and improving character development techniques in the animation sector.

- 66.3% of our research outputs (UoA32) were assessed to be internationally excellent in terms of originality, significance and rigour, or above

- 100% of our research environment was scored as conducive to producing research of internationally excellent quality and enabling very considerable impact, with 72.5% of that classed as conducive to world-leading research quality and outstanding impact

- 83.3% of our impact case studies were found to have very considerable impact in terms of their reach and significance.

Find out more about our REF submission